Meet the Enemy:

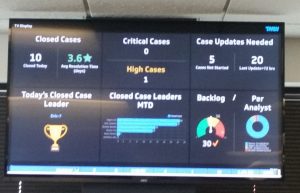

Sure, it looks all innocent and helpful. Just sitting there informing you of your business activities. But all too often I’ve seen this hideous monster ruin a SQL Server.

Let’s take a moment and talk about why this TV is our enemy. Then we can follow up with a few ways to fix the problem.

It’s becoming more and more commonplace to place these monitors around an office. It’s becoming so common in my industry, transportation, that when I get a call saying that system performance is falling off suddenly I have learned to ask about any new TVs early in my call.

What I often find is the TV in the corner, or perhaps there are several of them, running a series of SSRS reports 2 or 3 times per minute in a slideshow. That, in and of itself, isn’t necessarily an issue. It becomes an issue if those reports are too big and run in a constant loop, especially when they are running against the production OLTP server.

I saw one once where the report was running 2x per minute according to the slideshow and took over 50 seconds to execute. When I got on the SQL Server it was trying to run 7 of these giant reports simultaneously. It was no wonder the ERP software was running super slowly.

If this sounds like something you’re experiencing or if you’re considering adding a dashboard monitor to your office please read on as we talk about 4 methods to end this madness!

Method 1: Tune your reports so that they run faster and use less resources.

This one may seem super obvious, but in my experience there is usually something to be gained from a tuning effort. When your query is running upwards of 2000x per day even a second or 2 saved can be significant.

Method 2: Snapshot difficult calculations

I saw a dashboard report once that was based on quarterly revenue. Once per minute, every minute, this report was adding up all invoices for an entire 3 month period and comparing it to a goal. Did that need to be calculated every minute? What if a separate SQL Server Agent job performed that calculation once every hour or maybe even just once every day and stored the results in a table. Then the report could simply read that value from a table instead of adding up thousands of individual invoices every minute.

Method 3: Point your reports at another copy of the database.

This one can be difficult and/or expensive if you need up-to-the-minute reporting, but can be worth that cost if it saves productivity on the production system.

If the data doesn’t need to be up to the minute on some reports then maybe a daily or hourly copy of production data using Transaction Log Shipping would work. If the data need to be closer to real time then Transactional Replication or an AlwaysOn Readable Secondary Replica may be appropriate.

Method 4: Use SSRS snapshots

This method is my favorite and so I’ve saved it for last. There is a feature built into SSRS that is fast, free, easy, and very useful. It allows SSRS to save query results for a predetermined amount of time then reuse those results on a subsequent report requests. This becomes very valuable if you can afford to update a report less often, but still want it to scroll on the screen more often.

The setting tells SSRS to save any database query results that are generated in a cache. Any subsequent report requests will use that same cached result set and will NOT requery SQL Server. This will continue until the saved results in the cache are expired.

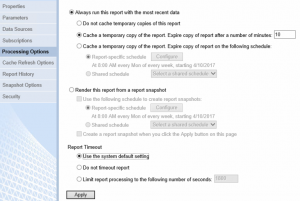

In the ReportManager UI click the drop down arrow next to the report you wish to cache and and choose “Manage”. On the next screen choose “Processing Options”. You screen will look something like this. The default selection is “Do not cache temporary copies of this report”. Switch to “Cache a temporary copy…” and select a number of minutes to save the cache. The default is 30 minutes.

In this example the query results will be cached on the first execution and then expire after 10 minutes. Once expired they will not be regenerated until the next report request. Since we are running in a repeating loop, that will mean only a moment later.

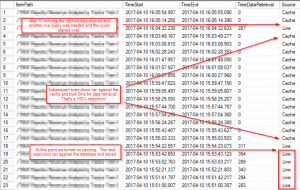

You can see the performance improvement using the native execution log that SSRS generates.

*This is shown in reverse chronological order so please read from the bottom up.*

Notice that the source was live and the query time was approximately 300ms on each execution until the setting was enabled. Notice then that the query execution time is zero for all 14 executions between when the results are generated and when they expire, 10 minutes later. With the cached data having expired, the 15th execution must query SQL Server and start the process over again.

***Please note that the cached results can only be used when the report is called with the exact same parameters. Any changes in parameters will cause a new Live query and a new cached report.***

Conclusion

Here is the best part – you can combine any or all of these methods to further reduce load. The best solutions I’ve seen to this issue involve a combination of the methods.

I recall one specific scenario where system performance had become terrible shortly after rolling out a TV in the dispatch center and filling it with useful stats. There was a large report that showed on the screen one time every minute. We were able to reduce the execution time of the report by 90% through tuning efforts and turned on SSRS snapshots for 10 minutes of reuse meaning the report only had to call the data query once every 10 minutes instead of 10x every 10 minutes. The end result was a 99% reduction in the effort by the SQL Server without sacrificing any meaningful data on the screen. The users of the production ERP system reported that things returned to normal shortly thereafter. I think I made a friend that day.

Good Day, happy that i stumble on this in bing. Thanks!